ROI Analysis - Threat Modeling Tool

Overview

COMPANY

Analog Devices Inc.

MY ROLE

UX Researcher

RESEARCH METHODS

User Interviews

TOOLS

Miro, Microsoft Forms

**My case study is based on an internal process, so I can't share certain details or visuals related to it.**

BACKGROUND

After product teams complete an internal security assessment in the Product Life Environment website, the next crucial step in the product development process is to create a threat model. Threat modeling is the process of identifying security threats that may affect the product a team is developing. Once product teams know what the threats are, they can plan how to mitigate those risks. Teams are encouraged to complete this step before the implementation phase.

The Product Security Assurance (PSA) team currently uses a third-party tool to guide product teams through the threat modeling process. The tool shows teams which security requirements they need to implement in their products and provides traceability for those requirements.

The PSA team approached me to conduct a Return on Investment (ROI) analysis of the third-party tool.

APPROACH

Over two weeks, I conducted a series of individual user interviews with engineers from both software and hardware product teams to determine the value of the tool. I then compared the insights from each team to understand how valuable the tool was to them.

RESEARCH GOAL

Define the value of the third-party tool for software and hardware product teams alike.

Determine whether the PSA team should renew licenses for the tool or find a new threat modeling tool to invest in.

USER INTERVIEWS

INITIAL ASSUMPTIONS

Software teams found more value than Hardware teams in using the tool.

The tool made it easier for teams to create artifacts and establish traceability (traceability).

Teams used the tool to expand their knowledge of security topics (training).

The tool was easy to use and set up (scalability).

The tool was introduced at the appropriate stage in the development process (compliance with process).

PROCEDURE

I conducted six individual user interviews to determine the true value of the tool and whether continued investment in it was worthwhile. All of the interviews were conducted on Microsoft Teams.

I spoke to one engineer from each of six product teams: three from software teams and three from hardware teams. I interviewed them individually rather than in groups because it was important to get insight into each user's experience with the tool and how it affected their own projects.

OBSERVATIONS

DEFINING THE TOOL’S VALUE

The initial assumption was that hardware teams would find the tool less valuable because it is not designed to support hardware products. In my interviews with hardware teams, this concern came up repeatedly.

When teams first used the tool, they completed a survey, and their answers generated a list of security measures they needed to implement in their product. The questions were geared toward software products, and some hardware teams felt the survey should have offered more options for hardware/silicon products.

Two of the three hardware teams (about 66%) felt that, compared to software teams, using the tool didn't have a major impact on their product's architecture, because they had already implemented most of their security features for government compliance. They used the tool to confirm that no requirements were missing. One hardware team reported that the tool did highlight some helpful testing requirements for securing their product. What these hardware teams did appreciate was that the tool made it easier to conduct a threat assessment, because they no longer had to manually compile a list of the security measures required during product development.

Software teams, by contrast, found the tool very helpful because it showed them which security requirements they needed to implement in their products. All three felt that the list captured security measures their teams would not have thought of before using the tool. With the list of requirements in hand, software teams were able to mitigate potential risks in their software before moving on to the implementation phase.

FEATURES

The two main features that the teams mentioned using were the list of requirements for security measures to be implemented and the integration of third-party software such as Jira.

Half of the teams I interviewed had integrated Jira with the tool while doing their threat assessments as a way to keep track of the requirements they had to complete. None of the teams generated any reports or used the tool to create any artifacts.

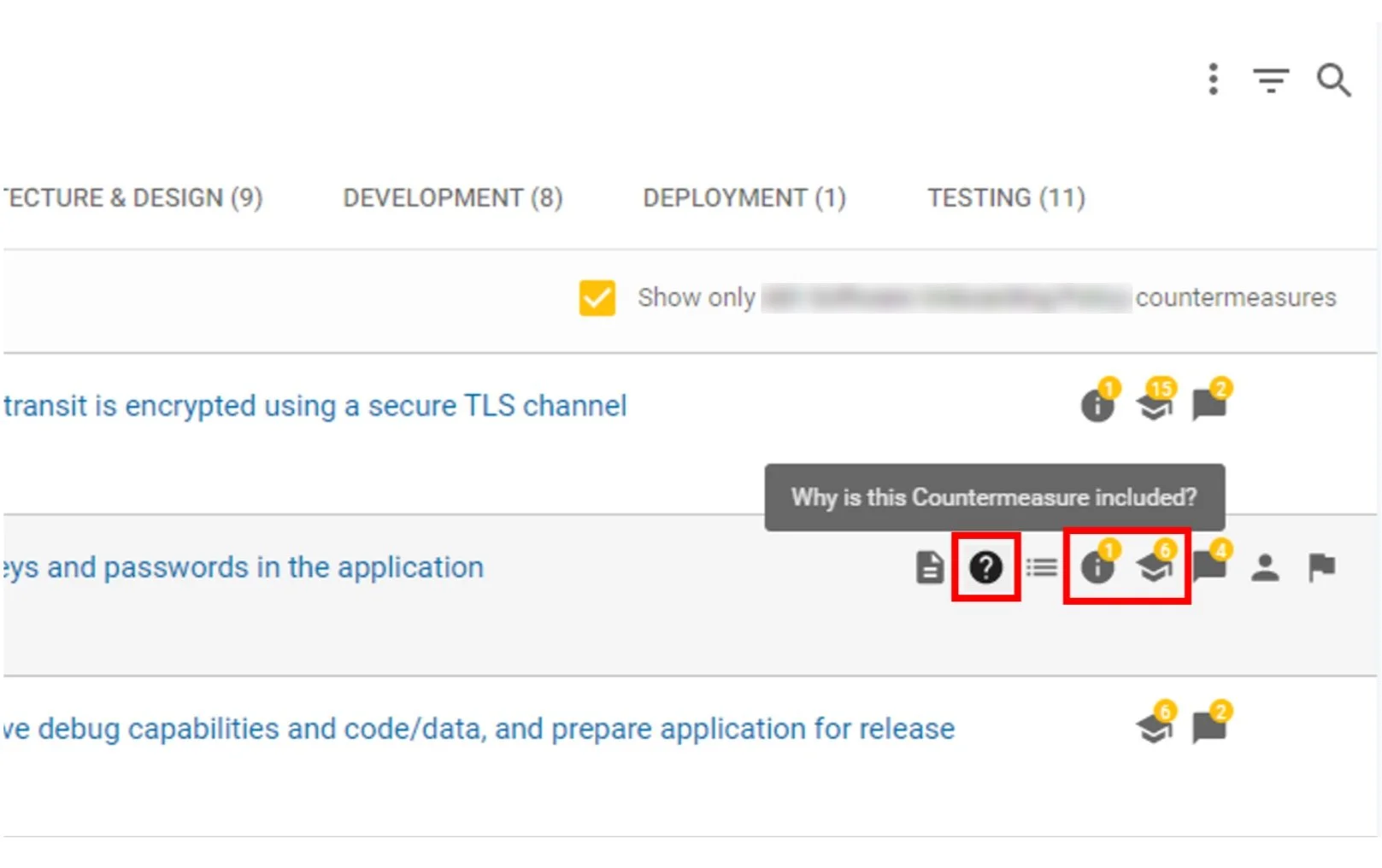

Some teams also liked the tool's help features. Next to each requirement, a set of icons was meant to help users understand what security measures they needed to implement in their product. Some of these icons are described below.

These icons appeared whenever users hovered over a requirement in their list. The "?" icon explained why a specific countermeasure was included, the "i" icon provided detailed information on how to implement the countermeasure, and the "graduation cap" icon indicated that training was available to learn more about the listed security measures.

Some product teams used the help and info features to better understand the security measures they needed to implement in their products, but none of the teams reported using the training feature.

SCALABILITY

All of the teams worked with the PSA team frequently while using the tool and completing their threat assessments. Besides handling the initial setup for all six product teams, the PSA team also helped the engineers navigate the tool and complete the survey.

None of the users had to complete any additional setup for the tool. With the PSA team's guidance, all of the teams felt they were able to answer the survey questions accurately and therefore get a thorough list of security requirements.

COMPLIANCE WITH OUR INTERNAL PRODUCT DEVELOPMENT PROCESS

Each team understood that completing a threat assessment was an important part of our internal product development process before they even began using the tool. Half of the teams felt they knew enough about their products to answer the initial survey questions successfully. The other half, who felt they didn't know enough, went back and revisited the list of requirements to make sure they had implemented all of the required security features.

ROI

The price for 20 licenses for the tool was $70k in 2022, or $3,500 per license. Assuming a burdened employee cost of $150/hr, I calculated the break-even point by dividing the cost per license by that hourly rate: $3,500 / $150 ≈ 23 hours. In other words, if the tool saved a team fewer than about 23 hours of work on threat modeling and our internal product development process, the license cost more than it saved. The teams I interviewed reported saving 40 to 80 hours of work on average by using the tool, well above the break-even point, so investing in the tool was worthwhile.
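The break-even arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not the method or spreadsheet used in the original analysis. It assumes one license per product team, so each team's reported hours saved are compared against the cost of a single license, and the function names are hypothetical.

```python
# Break-even sketch for the threat modeling tool license.
# Figures from this case study: $70k for 20 licenses (2022) and an assumed
# burdened employee cost of $150/hr.
# Assumption (not stated in the source): one license per product team.

LICENSE_BUNDLE_COST = 70_000  # USD for 20 licenses in 2022
LICENSE_COUNT = 20
BURDENED_RATE = 150           # USD per hour (assumed in the analysis)


def break_even_hours(bundle_cost: float, licenses: int, hourly_rate: float) -> float:
    """Hours of work a single license must save before it pays for itself."""
    cost_per_license = bundle_cost / licenses
    return cost_per_license / hourly_rate


def net_value(hours_saved: float, bundle_cost: float, licenses: int, hourly_rate: float) -> float:
    """Labor value of the hours saved minus the cost of one license (USD)."""
    return hours_saved * hourly_rate - bundle_cost / licenses


if __name__ == "__main__":
    threshold = break_even_hours(LICENSE_BUNDLE_COST, LICENSE_COUNT, BURDENED_RATE)
    print(f"Break-even: {threshold:.1f} hours per license")  # prints "Break-even: 23.3 hours per license"
    # The interviewed teams reported saving 40 to 80 hours on average.
    for hours in (40, 80):
        value = net_value(hours, LICENSE_BUNDLE_COST, LICENSE_COUNT, BURDENED_RATE)
        print(f"{hours} hours saved -> net ${value:,.0f} per license")
```

Under these assumptions, the reported 40 to 80 hours saved correspond to a net labor value of roughly $2,500 to $8,500 per license, while any team saving fewer than about 23 hours would produce a negative value.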

Some of the PSA team's concerns about the tool matched those of the hardware teams: the tool was less valuable for them because it lacked hardware/silicon-specific security requirements, although the vendor reported that it was working on adding more hardware-based threats. On the other hand, this tool offered important features that other threat modeling tools on the market did not. Through additional research, I found that none of the other tools offered a survey that users could complete to generate a list of threats to mitigate. This tool also guided users through the entire Software Development Life Cycle, something the other third-party tools were missing.

I determined that the PSA team should renew their licenses for SD Elements and continue encouraging product teams to use the tool to complete their threat assessments. The tool saved time for both the PSA team and product teams because it significantly simplified the threat modeling process, and it also saved the PSA team an X amount of money each year.